Case Study

Multi-Agent AI-Assisted Workflow for Frontline Managers

Role

UX Design Intern

Tools

Figma, Miro, Notion

Timeline

October 2024 to November 2024

Overview

Frontline managers spend a significant portion of their time answering routine employee queries (e.g., leave balances, payroll questions, policy clarifications). This repetitive burden slows response time and prevents managers from focusing on higher-value work.

This project explored how a multi-agent AI system could:

Automate routine queries

Surface complex cases

Keep managers in control of final decisions

What is the project about?

The goal was to design a platform where frontline managers could seamlessly manage employee requests in partnership with AI agents. The system needed to:

Handle repetitive queries using AI agents

Provide managers visibility into AI reasoning

Allow managers to intervene, edit, or override responses

Prevent incorrect or tone-deaf replies from being sent automatically

Challenges Tackled

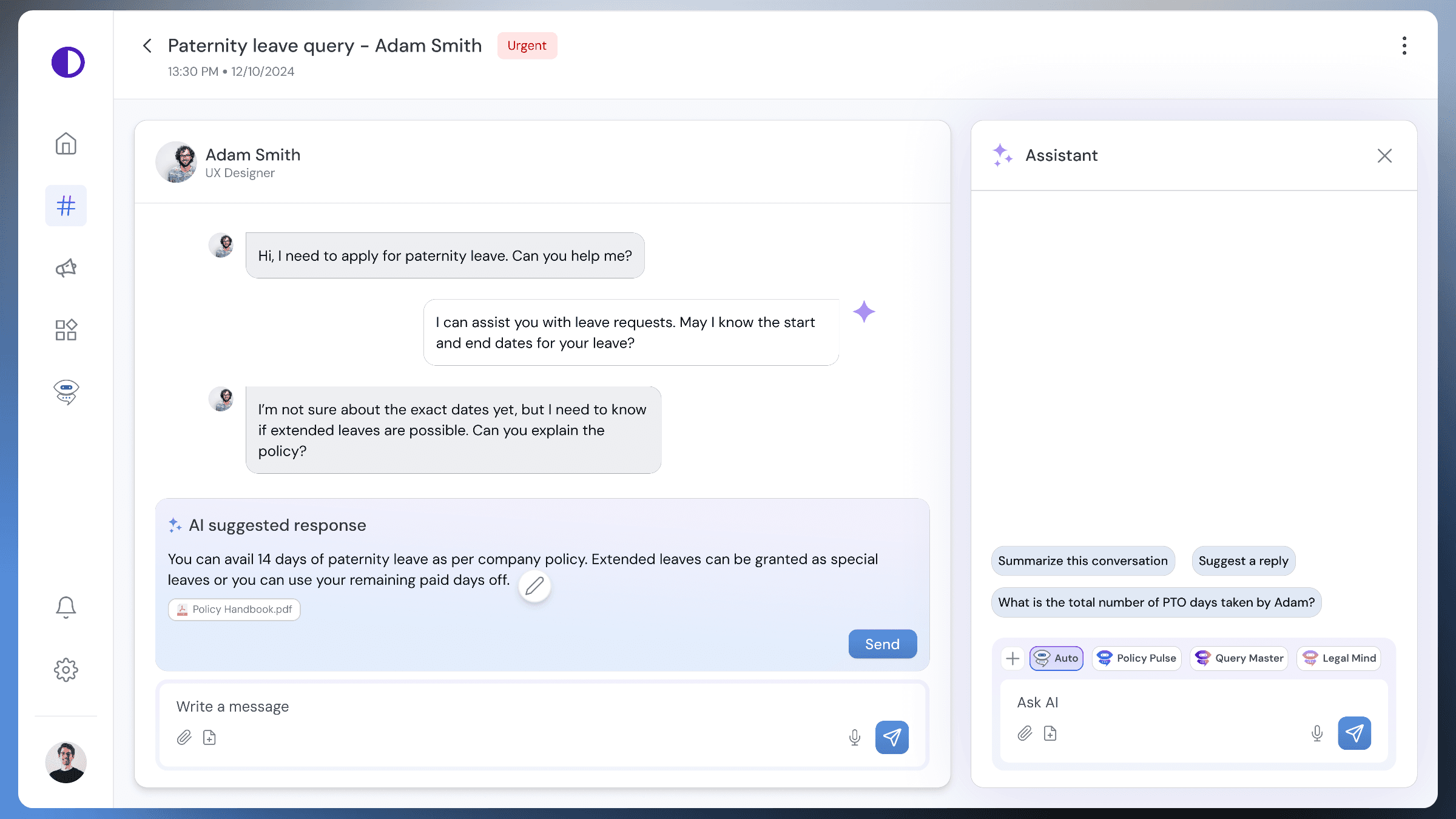

01︱Human-in-the-Loop Workflow

AI often struggles with complex or ambiguous requests, necessitating human intervention.

Solution: Designed a workflow where

AI agents handle routine queries and flag complex cases.

Managers receive clear visual cues for unresolved requests.

Managers can edit, approve, or completely rewrite responses manually or with the help of agents.
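The routing described above can be sketched in a few lines of Python. This is a minimal illustration rather than the platform's implementation: the `Draft` type, the `route` and `approve` functions, the confidence score, and the 0.8 threshold are all hypothetical names and values introduced here. The case study does not say whether routine replies are sent automatically or queued for sign-off, so the sketch assumes every draft waits for the manager.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    READY_FOR_APPROVAL = "ready_for_approval"  # routine: agent drafted a reply
    NEEDS_MANAGER = "needs_manager"            # complex: flagged for the manager
    APPROVED = "approved"                      # manager signed off


@dataclass
class Draft:
    query: str
    reply: str
    confidence: float  # hypothetical score in [0, 1] reported by the agent
    status: Status = Status.NEEDS_MANAGER


# Hypothetical cutoff; a real system would tune this or rely on other signals.
CONFIDENCE_THRESHOLD = 0.8


def route(draft: Draft) -> Draft:
    """Mark a draft as routine or flag it for the manager's attention."""
    if draft.confidence >= CONFIDENCE_THRESHOLD:
        draft.status = Status.READY_FOR_APPROVAL
    else:
        draft.status = Status.NEEDS_MANAGER
    return draft


def approve(draft: Draft, edited_reply: Optional[str] = None) -> Draft:
    """Manager approves the draft, optionally replacing the reply text first."""
    if edited_reply is not None:
        draft.reply = edited_reply
    draft.status = Status.APPROVED
    return draft
```

In this sketch the agent never sets `APPROVED` itself; only the manager-facing `approve` call does, which mirrors the goal of keeping final decisions with the manager.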

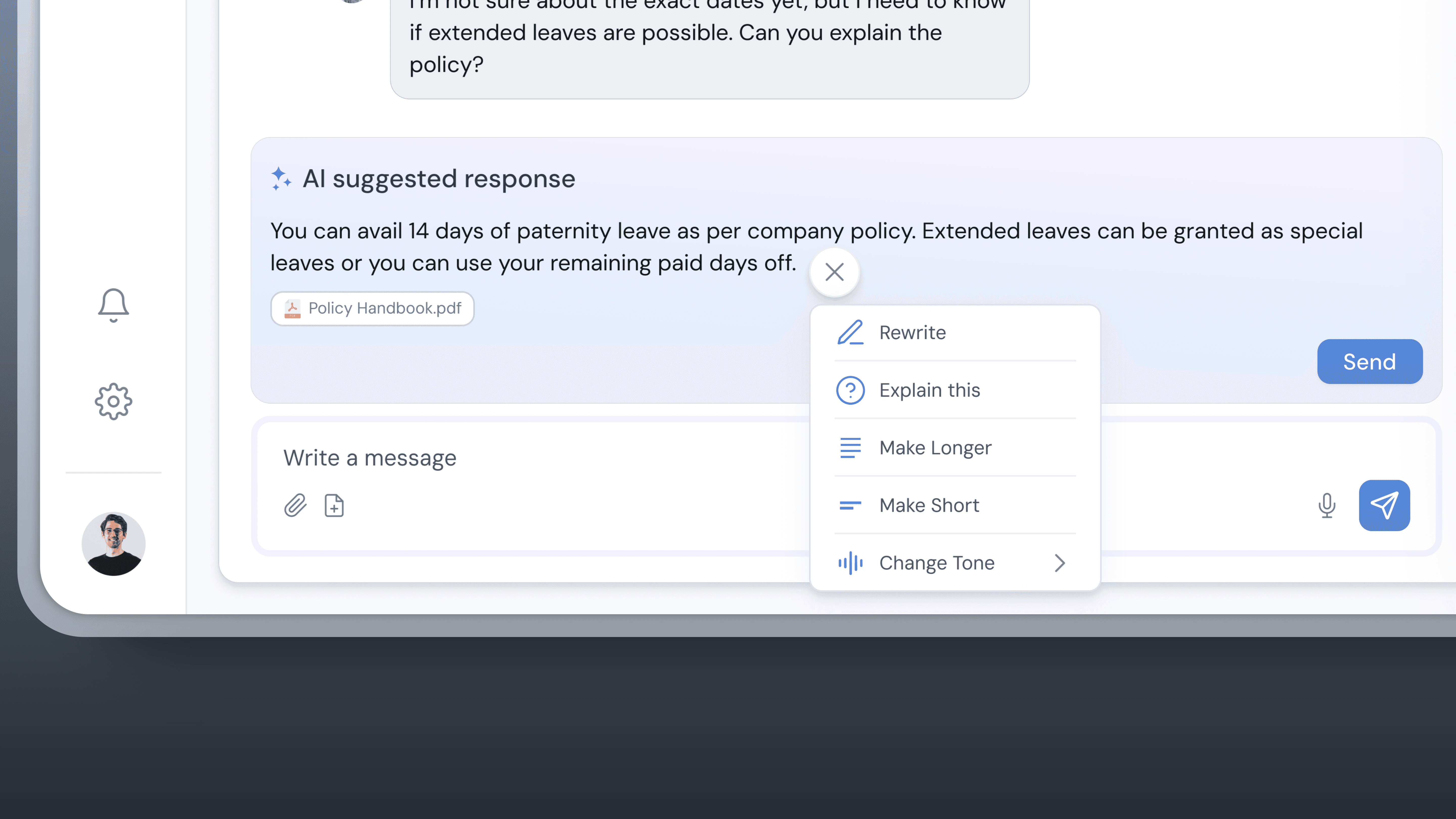

02︱Inline Edit for AI-Suggested Responses

Managers needed to tailor AI-generated responses for tone, brevity, and factual accuracy.

Solution: Introduced an inline edit feature that allows managers to

Directly modify AI-suggested responses for clarity or precision.

Shorten responses to align with the tone of the conversation.

Quickly change the tone (e.g., professional, empathetic, or casual) for appropriate communication.

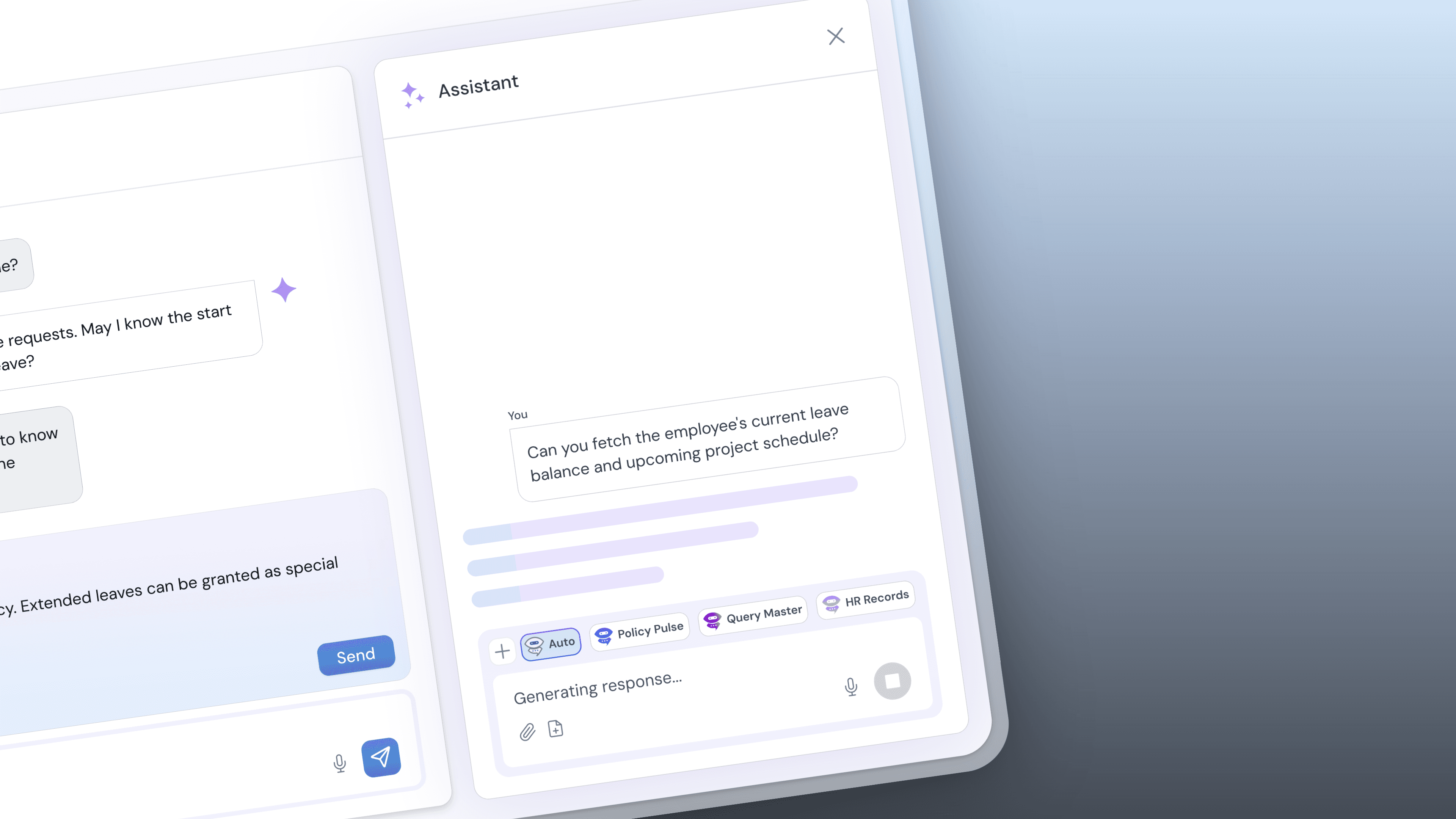

03︱AI Assistant

Managers needed a comprehensive tool to quickly understand issues and ask contextual questions about ongoing conversations between employees and AI agents.

Solution: Designed an AI assistant that

Summarizes conversations to provide concise overviews.

Allows managers to ask detailed, context-specific questions about the issue.

Provides accurate, fact-based answers with citations in collaboration with the agent responsible for the query.

Enables managers to make informed decisions without needing to sift through large amounts of data.

Design Approach

Research

Our design process began with extensive stakeholder research:

Conducted in-depth interviews with frontline managers

Analyzed workflows in factory and HR management settings

Studied existing AI collaboration interfaces such as ChatGPT's Canvas and Claude's Artifacts feature

Gathered inspiration from tools like NotebookLM

Understanding the underlying tech

The platform integrated Retrieval-Augmented Generation (RAG) to:

Pull real-time information from internal databases

Improve factual grounding

Provide citations for transparency
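To make the retrieve-then-cite idea concrete, here is a toy sketch in Python. It is an assumption-laden illustration, not the platform's pipeline: it ranks passages by simple word overlap instead of embeddings, it stops before the generation step, and the `Passage` type, the `retrieve` and `build_prompt` functions, and the sample documents are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Passage:
    source: str  # hypothetical document identifier, reused as the citation tag
    text: str


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and strip basic punctuation from each word."""
    return {w.strip(".,?!").lower() for w in text.split()}


def retrieve(query: str, passages: List[Passage], k: int = 2) -> List[Passage]:
    """Return up to k passages that share the most words with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(p.text)), p) for p in passages]
    scored = [(s, p) for s, p in scored if s > 0]  # drop passages with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored[:k]]


def build_prompt(query: str, hits: List[Passage]) -> str:
    """Assemble a grounded prompt whose context lines carry citation tags."""
    context = "\n".join(f"[{p.source}] {p.text}" for p in hits)
    return (
        "Answer using only the context below and cite sources.\n"
        f"{context}\nQuestion: {query}"
    )
```

Because each context line carries its source tag, an answer generated from this prompt can point back to the document it relied on, which is what lets a manager check a claim before approving it.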

Constraints & Context

The project was bounded by several real constraints:

Technical: The solution used RAG, which improves factual accuracy but does not resolve ambiguity in the request itself.

Timebox: 6-week design sprint.

User tolerance: Managers were open to AI assistance, but responses sent autonomously without review were unacceptable due to liability and trust concerns.

Iterations

We drew our initial design inspiration from Claude and ChatGPT's Canvas, so early designs focused too heavily on automation, with managers stepping in only after a failure. Later iterations reframed the workflow around empowered oversight.

Added side panels with AI rationale to support decision-making.

Iteratively tested interaction points to ensure managers felt in control, not bypassed.

Outcomes and Potential Impact

Projected outcomes included:

Reduced response times for routine queries

Improved manager efficiency

Increased consistency in communication

Higher trust in AI-assisted workflows

Success would be evaluated using:

Average response turnaround time

Escalation rate of queries

Manager satisfaction and trust feedback
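The first two metrics are simple to compute once each query is logged. The sketch below assumes a hypothetical `QueryRecord` log entry with received and responded timestamps and an escalation flag; neither the record shape nor the function names come from the project.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class QueryRecord:
    received: datetime
    responded: datetime
    escalated: bool  # True if the agent flagged the query for the manager


def average_turnaround(records: List[QueryRecord]) -> timedelta:
    """Mean time from receipt to response; assumes a non-empty list."""
    total = sum((r.responded - r.received for r in records), timedelta())
    return total / len(records)


def escalation_rate(records: List[QueryRecord]) -> float:
    """Share of queries escalated to a manager; assumes a non-empty list."""
    return sum(r.escalated for r in records) / len(records)
```

Tracking both together matters: a falling turnaround time with a rising escalation rate would suggest the agents are answering quickly only by handing more work back to managers.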

Reflections

This project reinforced that designing AI systems is less about maximizing automation and more about structuring responsibility.

Three key learnings:

Human oversight increases adoption. Managers are more willing to use AI when they retain control.

Explainability supports trust. Making the AI's rationale visible reduced managers' hesitation when approving responses.

Iterative collaboration grounds the design. Continuous feedback from users and engineers refined it for real-world scenarios.

By centering managers as the human in the loop, this project shows how AI can augment human capabilities while keeping critical decisions in human hands.

Contact

Let's start creating together
